Political (Dis)trust of Science All Around the World

Plus literally the most cursed data visualization I've ever seen.

Hello Friends—Happy Friday! Welcome to another edition of Pulse of the Polis.

Today, we’re going to talk about a couple of new studies looking at global (mis)trust of science and scientists—as well as the ways that political identity can come into play.

In 2023, Pew Research published a study1 that looked at US adults’ trust in scientists to “act in the best interest of the public”. Trust in this group has eroded since January of 2019, largely driven by increased skepticism by Republicans and Republican-leaners post-Covid. Democrats, for their part, had a boost in trust during the early part of the pandemic but have returned to about where they started; though may be a little less trusting than they were at the start.

Even pre-Covid, however, Democrats were generally more confident in science than Republicans in the US from (at least) 2008 onwards according to this Associated Press investigation using General Social Survey data. But, unfortunately, distrust in science (and scientists) is not a uniquely American phenomenon—nor are the political gaps in trust.

I recently came across two new papers that looked at this topic: One that was recently published in Science Communication and a pre-print currently under review (presumably at a different journal). The first focuses on one plausible mechanism for this distrust: stereotypes of scientists’ political affiliations. People often assume that scientists are politically liberal, which may drive distrust towards them. The second looks at levels of trust in scientists around the world and investigates whether the effect of respondents’ political leanings change depending on what countries you’re talking about. For example, are there countries where perceptions of scientists aren’t politicized at all, or even where conservatives are more trusting of science?

We’ll talk about those and a few other nuggets that caught my eye this week.

Explaining Polarized Trust in Scientists: A Political Stereotype-Approach | Science Communication

This research used a survey experiment, a set of surveys, and an original analysis of Twitter data to make the argument that a large part of the distrust in scientists comes down to the fact that many people stereotype scientists as politically liberal. In the first study, researchers provided 200 respondents a random vignette characterizing a hypothetical scientific research institute as either being politically liberal or conservative. They then asked respondents the extent that they trusted the findings of this organization. They found that political Liberals more strongly trusted liberal scientific institutions and distrusted conservative institutions. Conversely, conservatives trusted hypothetical conservative institutions while distrusting liberal ones.

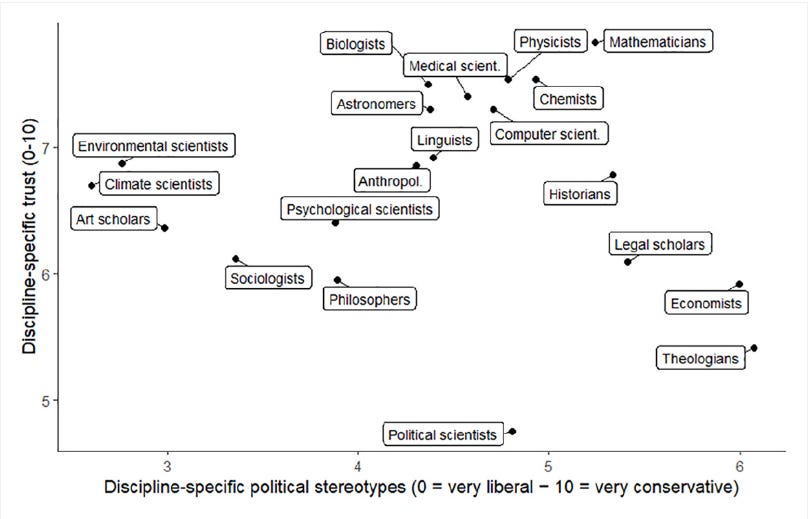

A series of surveys followed which showed that people generally saw scientists of various scientific disciplines as politically liberal. Some disciplines (such as environmental science) are seen as highly liberal while others (such as theologians) are seen as either moderate or moderately conservative. However, no scientific discipline tested had an average score that placed the typical perception squarely into the conservative camp. Though there was no experimental manipulation here, participants were more likely to report trusting scientists working in disciplines that they perceived as being more congruent to their own political ideologies. This was partially replicated in a German survey sample as well, showing that the tendency for some scientific enterprises to be seen as more “liberal” than others (and for trust to vary based upon people’s proximity to these preconceptions) translates outside of the US. A follow-up analysis of these survey data showed that the liberal-stereotyped Sociologists had higher trust among Liberals (and lower trust among Conservatives) than the more moderately-perceived Economics2.

Finally, the researchers investigated whether this stereotyping and trust carried over into who people followed on Twitter. They analyzed the people who followed either the GOP’s Twitter account or the Democrats’ Twitter account (ignoring weirdos like me who follow both to stay informed) and then looked to see if these individuals were more/less likely to follow particular high-profile sociologists or economists. (Researchers chose individuals who did not signal their political affiliation in their biography). They found that followers of the GOP on Twitter were far less likely to follow the sociologists than followers of the Democrats. Though, in a surprise to no one, both were far more likely to follow economists; sociologists don’t get anywhere near as much love. We would expect this sort of pattern if Republicans (and Democrats) were imputing partisanship onto sociologists; in-sorting suggests that people would only tend to follow those who they agree with. (At least on average. Again, there are people who follow those they disagree with to stay abreast of things, but they are not reflective of the typical Twitter user).

One of the things I like about this paper is that it’s “obvious” in all the right ways. To clarify, while anonymous reviewers often use “obvious” as a pejorative cudgel for scientific research, I don’t. I love obvious research—especially when it’s as well executed as this3. Though I find some of the prior theorizing on moral foundations and other reasons why “conservatives” may not like science to be fascinating, I can tell you that, when reading/listening to conservatives talk about science skeptically, it’s often clear that there is some strong partisan assumptions going on. I’d bet a buck that a text analysis would find “liberal” or something semantically similar is frequently very nearby “scientist” or “researcher” in the text. So kudos to them for explicating on this and providing it such thoughtful treatment.

To me, the first three studies are the strongest of the bunch. The experiment provides evidence for a causal relationship in the US, and the surveys to follow showed that the public do think of different scientific disciplines as having differing political leans—with trust appearing to follow how that perceived lean relates to the respondents’ own predilections. AND then they showed that latter bit in a whole other political context: Germany during Covid-19. I find the other elements useful too, though I’ll confess that I think “Twitter following” is a fairly weak proxy for “trust”—even if Twitter wasn’t filled by (relative) weirdos compared to the US general population, the kind of “trust” that’s exhibited by following a particular social media account is conceptually different than the more generalized social trust that the paper focuses on. (Though, undoubtedly, the latter is predictive of the former; it’s just not the cleanest operationalization). Still, kudos for thinking about what their theory implies about reality and then searching for independent validation of that anticipated relationship. I think it makes their overall argument all the stronger.

Another major thing I like about this is that it follows what I’m coming to realize is my favorite pattern of scientific inference: Establish that the causal relationship can be identified in a more “sterile” laboratory setting, demonstrate that evidence for it can be discerned in the messiness that is real life where loads of simultaneous influences are happening all at once, and find broader descriptive evidence of it happening and its implications. The presentation order of the studies can vary, I just like when the weaknesses of one mode of inquiry are covered by another and you still find similar things. You could ground this sort of thing up and replace my coffee with it; I get super energized by research like this.

I find the experiment featured in the first study to be super clever (though I do have a couple of methodological quibbles about the specifics). One thing I wish, though, is that they repeated the experiment again two more times. Once more in the US to validate the existence of the causal effect and then once in Germany to show that the causal relationship exists there too. It’s possible that the substantively similar inferential relationships between the two countries share different causal wellsprings. It’s less than I think that political stereotypes doesn’t matter at all in Germany, but it’s possible that this relationship matters to a different degree. But, obviously, money doesn’t grow on trees. Fielding survey experiments can be expensive. This is less a “this should’ve been done” statement and more of a “I wish this had happened. Oh well. An obvious opportunity for a follow-up!”

In a similar vein, there was something about their Twitter analysis that inspired a follow-up thought: As part of their research design, they didn’t consider scientists who publicly demonstrated a political leaning in their profiles. This is definitely an understandable design decision given their aims. But I wonder if, say, Conservatives were more likely to follow a sociologist who explicitly identified as a conservative (or otherwise strongly signaled Conservative in-group membership). That seems like a logical extension of their theory (insofar that, again, it maps onto Twitter follows). If a Conservative’s beef with sociologists is that they’re liberal, they may be disproportionately more likely to follow a sociologist with conservative bona fides than they are to follow sociologists generally—and, similarly, maybe Liberals are less likely to follow such people as they are to follow otherwise politically unidentified sociologists because they explicitly signal out-group membership that runs contrary to their presumed membership. Likewise, if Liberals are intuiting in-group membership among sociologists, we might not see any substantive difference in following patterns among sociologists who signal liberal political commitments. Especially among more politically knowledgeable and engaged liberals, who presumably are more likely to engage in partisan stereotyping.

Hopefully some follow-up work is done on these particularities. But, for now, let’s go ahead and expand our scope from “Mostly the US (and a bit of Germany)” to “67 of the Earth’s most populous countries.”

Trust in scientists and their role in society across 67 countries | Preprint

This paper is the result of a collaboration between the corresponding author (Viktoria Cologna) and over 200 other collaborators. These collaborators worked out of the Many Labs project to cover over 71,400 respondents in 67 countries fielded from November 2022 through August 2023. The core aims of the research was to see the extent that trust in scientists varied across countries as well as how antecedents of said trust (i.e,. religiosity, political identity, education) and downstream effects (i.e., scientists’ place in society).

Using a trust-in-science scale that was translated and explained across all country contexts, the researchers find that trust in science does indeed substantively vary across different national contexts. However, most respondents tend to be more trusting of science than not, scoring above 3 on average on their 5 point scale (with 1 being “very low” trust, 3 being “neither high or low” trust, and 5 being very high trust). Similar to the study above, they find that what we in the US would identify as “liberal” political ideological placement is typically positively correlated with trust in scientists, though there are places where this relationship appears different and, they argue, fully reversed. They also find that religiosity is positively associated with trust in scientists4 , as well as post-secondary education, age, and income. Additionally, endorsing “scientific populist” attitudes (“This is all so simple, the eggheads are overcomplicating things”) is negatively associated with trust in scientists. They also tend to find that the surveyed populace broadly agree rather than disagree that scientists should “work closely with politicians to integrate scientific results into policy making” (54% agree vs 20% disagree) and that “scientists should communicate about science with the general public” (83% agree vs 4% disagree).

To put it explicitly: Just like the first paper, this found a relationship where Liberal ideological self-placement is associated with greater trust in scientists. Where this compliments the former is that it finds this relationship while considering 65 additional countries and finds that this relationship is the prevailing average. However, that average masks a fair amount of country-specific context and differences in the strength of that relationship.

I think, in general, this is a phenomenal undertaking and a brilliant piece of descriptive research. There’s so much research done on trust in “Western” countries and comparatively far less done on trust in other contexts. Plus, by using the same (translated) scale, they could actually compare various country’s positions in a more apples-to-apples way5. One of the big things I like about this paper is that they explicitly incorporate the idea that different countries can have different baseline levels of trust and that the antecedents to scientific trust may exhibit contextually modified relationships. So much of science—social science especially—hinges upon the specifics of the context. Its great to see that not only respected but be one of the principle topics of interest!

There are three things that I would personally suggest as areas of improvement/clarification for this article. First, relates to their finding that the relationship between political ideology and trust varies across countries. The way that they present this is claiming that there are some countries where the relationship between political-leaning and trust are null and others where its actually reversed. However, it is unclear if their model actually shows that the liberal → trust relationship is fully reversed or if there are countries where it is substantially weaker6. I fully believe that there are places where this core relationship is stronger or weaker while still being present—but I tend to be more skeptical that there are so many places where this functional relationship is fully reversed. I could be wrong though; it would’ve been nice to be able to inspect the code and/or for the language in the article to have been a bit more clear on this.

The second and third thing have the same solution so I’ll address them both at once:

They model the relationship between political ideology and their 5-point “trust in scientist scale” as if it were a fully linear scale where people could, in theory, select values like 3.6, 1.0, and 4.2, rather than responding to whichever text description best matches their attitudes that researchers later assigned a numerical value.

They acknowledge difficult model fits preventing them from pursuing the full model specification that they originally intended. From personal experience, I suspect that it’s a consequence of software packages for multilevel models as they used.

For the second bullet: I have personal experience with that package. I love it to pieces but it can only be so helpful when you expect super complicated data generating processes. And I suspect that the author(s) knew about the first bullet—it’s a super common thing in the Social Sciences. The solution to it is called an “ordinal” or “cumulative logistic” regression. Unfortunately, given that their observations are nested within countries, they need to approach it with the general family of models that they used (called “multilevel”7) and there aren’t many accessible pieces of statistical software that will let them do both. And, if given the choice, I’d also do exactly what they did and prioritize the multilevel structure of the data.

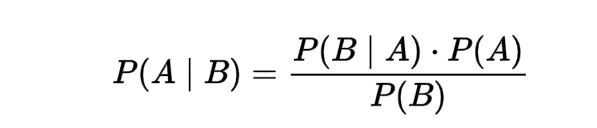

But you don’t have to choose! To that end: May I have a moment to talk about our Lord and Savior: Bayesian Modeling.

Multilevel ordinal regression is entirely possible in the Bayesian framework. (I was literally doing it myself earlier this week using the incredible {brms} package in R). It may take a bit (ok, a lot) more time for a model to converge than what they got using their current package, but that time can be sped up pretty dramatically with a few modest specifications. And it’s possible to address the first point as well; a well-specified Bayesian model can overcome the lack of convergence seen in the default paradigm’s analogues.

In short, I think that this paper can really improve from a Bayesian treatment. (If, somehow, an author is reading this and wants a few pointers—-please drop a comment so we can get in touch! Or anyone really. I’m generally really happy to help with a few pointers or officially consult on this stuff depending on the level of involvement). But I think that this is in general super interesting work that furthers our understanding of global trust of scientists and how these perceptions affect people’s beliefs in the role of scientists in society.

Some additional nuggets before you go.

This super interesting data visualization looks at the cost of every Big Mac at every McDonald’s in the United States. Though Mickey-D’s works like hell to have things taste the same no matter where you go, they sure don’t cost the same. The Big Mac Purchasing Power Parity Index appears to be just as useful on a subnational level as it is on an international level.

The R package researchers used above for the multilevel modeling has an interesting data set called the “cake” dataset which contains “data on the breakage angle of chocolate cakes made with three different recipes and baked at six different temperatures…presented in Cook (1938).” This incredible blog post is an account of trying to track down the original Cook 1938 article—the provenance of this ostensibly inconspicuous dataset that’s helped loads of people understand some of the hardest concepts in applied statistics.

This post by Dannagal Young compares and contrasts her book “Wrong” with Yascha Mounk’s “The Identity Trap.” I’m personally a big fan of Young’s work and find the description of both sets of research really illuminating.

If I’m forced to see this cursed data visualization, then, by God, you’re gonna be forced to see it too.

Pew is, like, the social sciences equivalent of “The Simpsons have already done it.” Except, honestly, it’s really hard to top Pew. The Simpsons however…

Economics is kinda funny in that, depending on who you ask, the practitioners of the dismal science are either some of the most conservative or most liberal. They are either apologists for the worst of capitalism or subversive socialists hell bent on dismantling all the things that Americans hold dear like apple pie and free on-demand parking. That’s my own observation at least, it’s not clear from the data. So I wouldn’t be surprised if this “moderation” comes from widespread ambivalence.

First off, testing “obvious” things is unsexy yet necessary in the same way that maintaining existing infrastructure versus building new shit. I would very much prefer that our knowledge of the world is as thoroughly vetted, and that it coheres with as much preexisting knowledge, as possible. Second, obvious That something is “obvious” means that the scientists were aptly tuned-in to reality and wrote their theory and explanation in an intuitive way. Both of those things are hard to do!

Though, here too, there are contexts where this relationship is moderated or even reversed. The authors argue that there are contexts where faith is not seen as being in conflict with science.

Though, as often happens with translated surveys, their instrument doesn’t exhibit full measurement invariance across all contexts. So it’s, like, Gala Apples to Fuji Apples (worst case Apples to Pears), but it’s not like it’s Apples to Carrots.

For the technically minded: It appears that they may have presented the random slopes (or some standardized version of the random slopes) that their model estimated. However, these should best be thought of, in this context, as a country-specific moderator of the base relationship. So if the US has a random effect of -3 and the base coefficient is -5, the actual coefficient for the US would be -8. If Mexico’s random slope is 0, then its coefficient is -5. If Malaysia has a random coefficient of +3, then their actual coefficient would be -2. If Egypt had a random coefficient of +7, then their actual coefficient would be +2. This means that Malaysia’s overall relationship between political ideology and trust would still be negative—as would Mexico’s—despite the positive modifier/null modifier, respectively. Only in Egypt, then, would the relationship actually be reversed. The others would still have the same substantive relationship, just the US’s would have a stronger one than the base, Mexico’s would be identical to the base, and Malaysia’s would be weaker than the base. There are different ways to extract this information; they could have extracted and presented the full coefficient or simply the moderator (random slope). Unfortunately, without the code (which they have not published as of writing), it is not possible to validate. To be clear: this is not an accusation of deception or ignorance on the part of the researchers—this stuff is incredibly difficult and this would be a common mistake. Even the best make coding errors; Lord knows it’s happened to me!

“Multilevel” in the sense that individuals are one level with nations being an additional level where the nations themselves (through culture and individual histories as well as different sampling procedures) could have their own effects on the outcomes and relationships. There are, unfortunately, way too many definitions of “multilevel” used across science though.

Hi Peter. Interesting article. Thanks. I have a quick question about the ordinal regression that you suggested for the TISP paper. For trust in scientists, there were 12 items and the dv would be a mean score of all those 12 items. Does it still make sense to consider that "ordinal"? In most of the tutorial explaining ordinal regression, the dv is a single item. And also, how do you interpret the coeff in these models? I mean, what is the scale? probit? Do you transform it to percentages or do you report it as it is? Thanks.